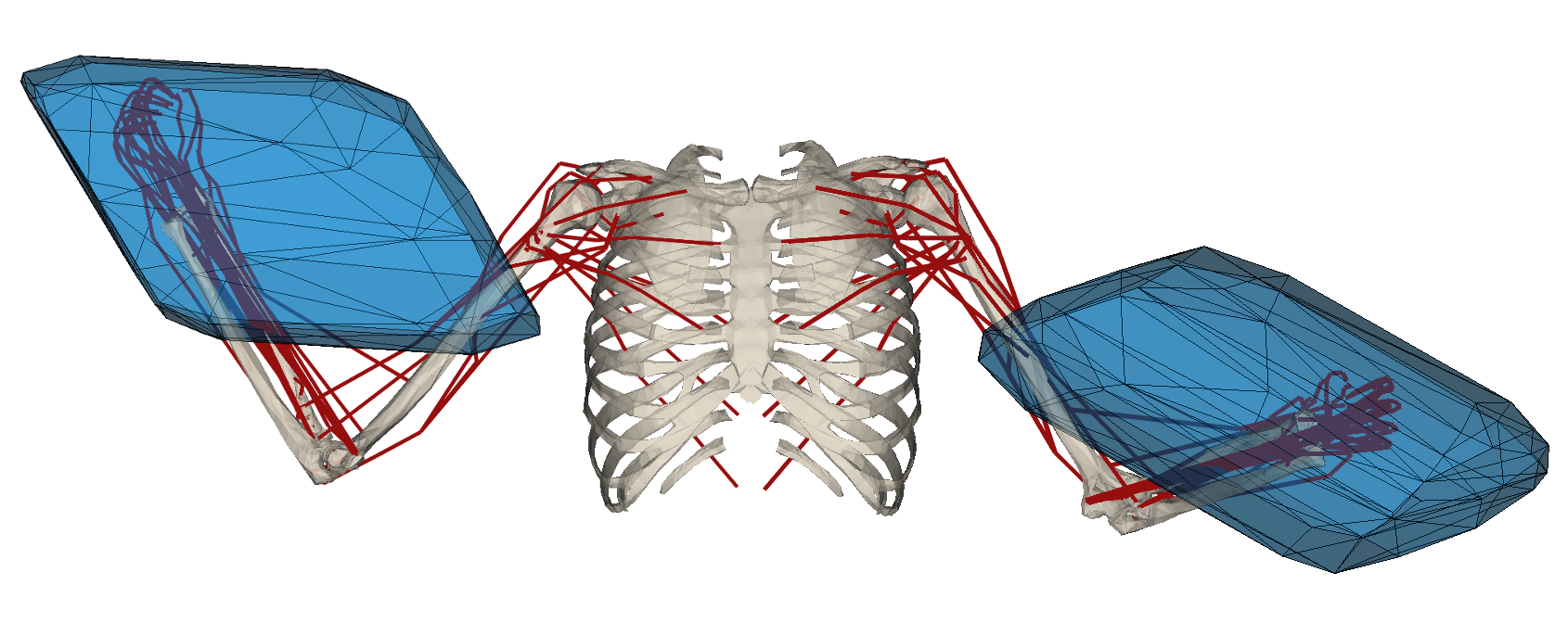

The LiChIE project (funded by BPI) aims to design a constellation of mini-satellites for optical Earth observation. Among many other topics, this requires rethinking the way satellites are produced in order to ease this highly complex process.

There is currently an unprecedented economic and societal demand for robots that can serve both as advanced, easily programmable tools for automating complex industrial operations in contexts where human expertise is key to success, and as assistive devices for alleviating the physical and cognitive stress induced by such industrial tasks. Unfortunately, there is a large gap between the expectations attached to idealized versions of such systems and the actual abilities of existing so-called collaborative robots. Beyond the limitations of existing systems, especially from a safety point of view, very few methodological tools can be used to quantify physical and cognitive stress. Formal approaches for quantifying the contribution of collaborative robots to industrial tasks performed by expert operators are also lacking.

Existing works in this domain do consider some aspects of the operator's current state in order to propose an appropriate robot behaviour. One of their conceptual limitations is that they assume an a priori defined human-robot collaboration scenario in which the expertise of the human operator matters but is limited to a single operation. Larger varieties of tasks are rarely considered and, when they are, the tasks are strictly separated between the two members of the human-robot dyad.

In this project, we propose to develop a coupled model of human-robot physical abilities that makes no a priori assumption about the type of assistance. This requires developing a parameterisable generic model of the potential physical link, and the constraints it implies, between the human operator and the robot. This model should make it possible to describe the task as performed by the human alone or with a collaborative robot through different interaction modalities. Online simulation of these scenarios, coupled with ergonomic and performance indicators, should allow both the discrete choice of the right assistance mode for the task currently being performed and the continuous modulation of the robot behaviour.
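As a purely illustrative sketch of that last idea, and not the project's actual method, the snippet below picks an assistance mode from simulated outcomes and computes a continuous assistance gain. Everything in it is an assumption made for illustration: the mode names, the normalised indicator values, the weighted-sum score, and the linear ramp with its `threshold` and `saturation` parameters are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SimulatedOutcome:
    """Hypothetical result of simulating one interaction modality."""
    mode: str
    ergonomic_cost: float  # assumed normalised to [0, 1], lower is better
    performance: float     # assumed normalised to [0, 1], higher is better


def score(outcome, ergonomics_weight=0.5):
    """Weighted trade-off between performance and ergonomic cost (assumed form)."""
    return ((1.0 - ergonomics_weight) * outcome.performance
            - ergonomics_weight * outcome.ergonomic_cost)


def choose_mode(outcomes, ergonomics_weight=0.5):
    """Discrete choice: return the mode whose simulated outcome scores highest."""
    return max(outcomes, key=lambda o: score(o, ergonomics_weight)).mode


def assistance_gain(ergonomic_cost, threshold=0.3, saturation=0.8):
    """Continuous modulation: linear ramp from 0 at threshold to 1 at saturation."""
    ratio = (ergonomic_cost - threshold) / (saturation - threshold)
    return min(1.0, max(0.0, ratio))


# Made-up indicator values for three hypothetical modalities.
EXAMPLE_OUTCOMES = [
    SimulatedOutcome("human_alone", ergonomic_cost=0.7, performance=0.6),
    SimulatedOutcome("comanipulation", ergonomic_cost=0.3, performance=0.8),
    SimulatedOutcome("teleoperation", ergonomic_cost=0.1, performance=0.5),
]

best_mode = choose_mode(EXAMPLE_OUTCOMES)
```

In this toy setting, raising `ergonomics_weight` shifts the choice towards modes that relieve the operator more, at the expense of task performance. A real system would derive the indicators from the coupled human-robot model rather than from fixed numbers.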

People involved:

- Antun Skuric (PhD Student)

- Vincent Padois (thesis advisor)

- David Daney (thesis advisor)